You can not simply publicly access private secure links, can you?

Turns out, you can even search for them with powerful search engines!

Summary

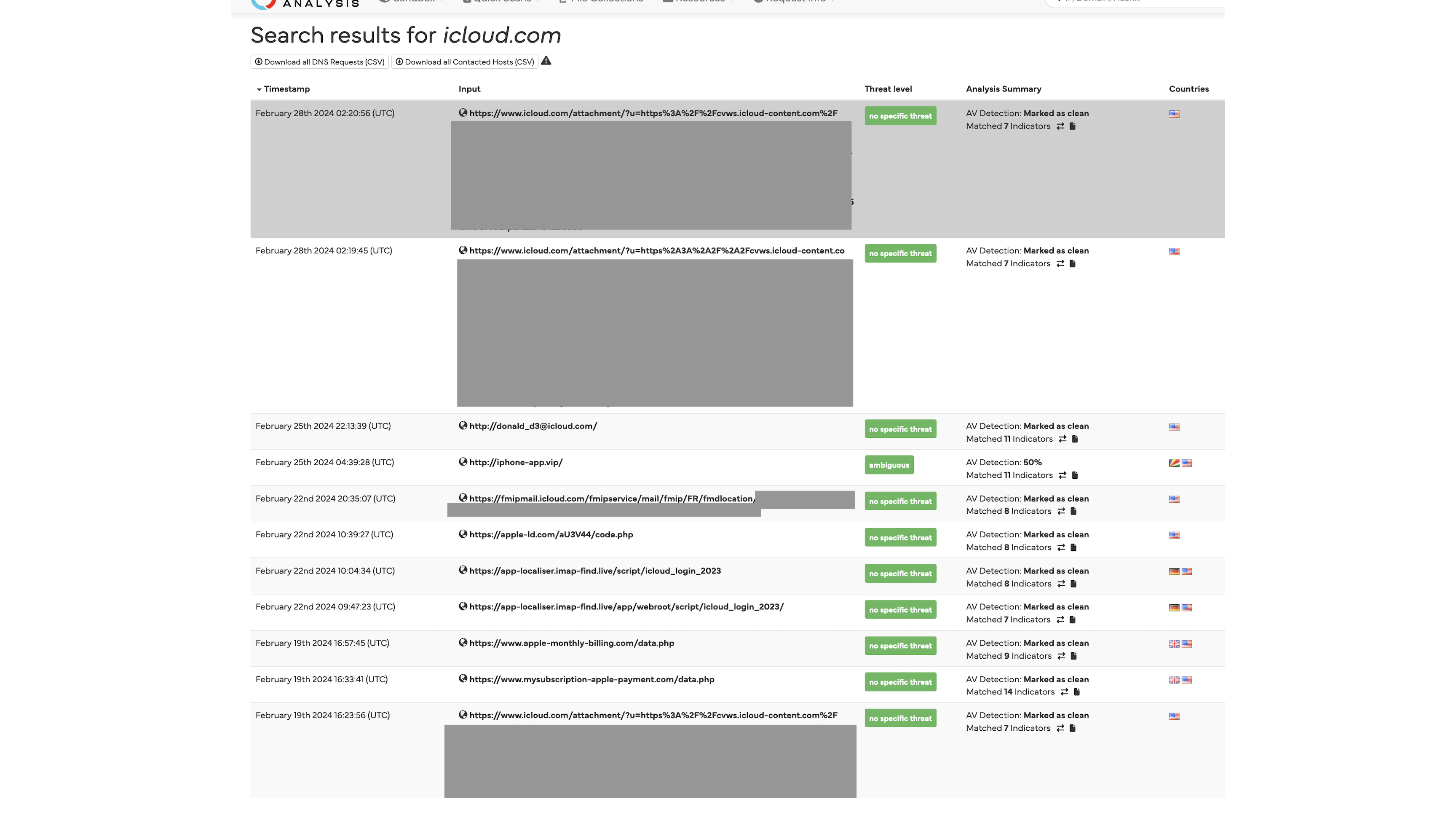

Popular malware/URL analysis tools like urlscan.io, Hybrid Analysis, and Cloudflare Radar URL Scanner store a large number of links for intelligence gathering and sharing. It is, however, not widely known that these services also store a large number of private and sensitive links, thanks to:

- Sensitive links mistakenly submitted for scanning by users who are unaware that the results are public information

- Misconfigured scanners and extensions that submit private links found in emails as public data

So what are all these links you refer to?

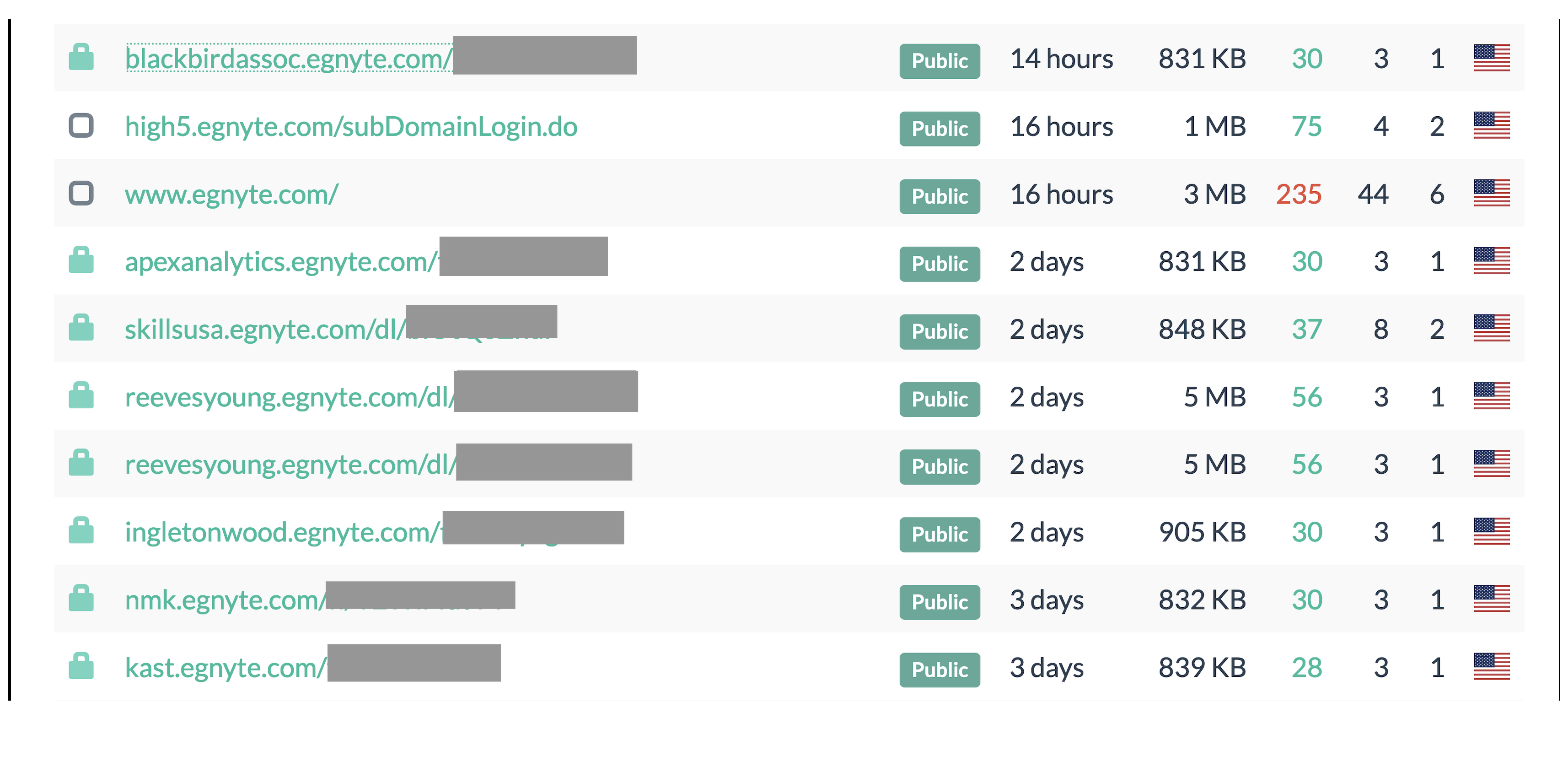

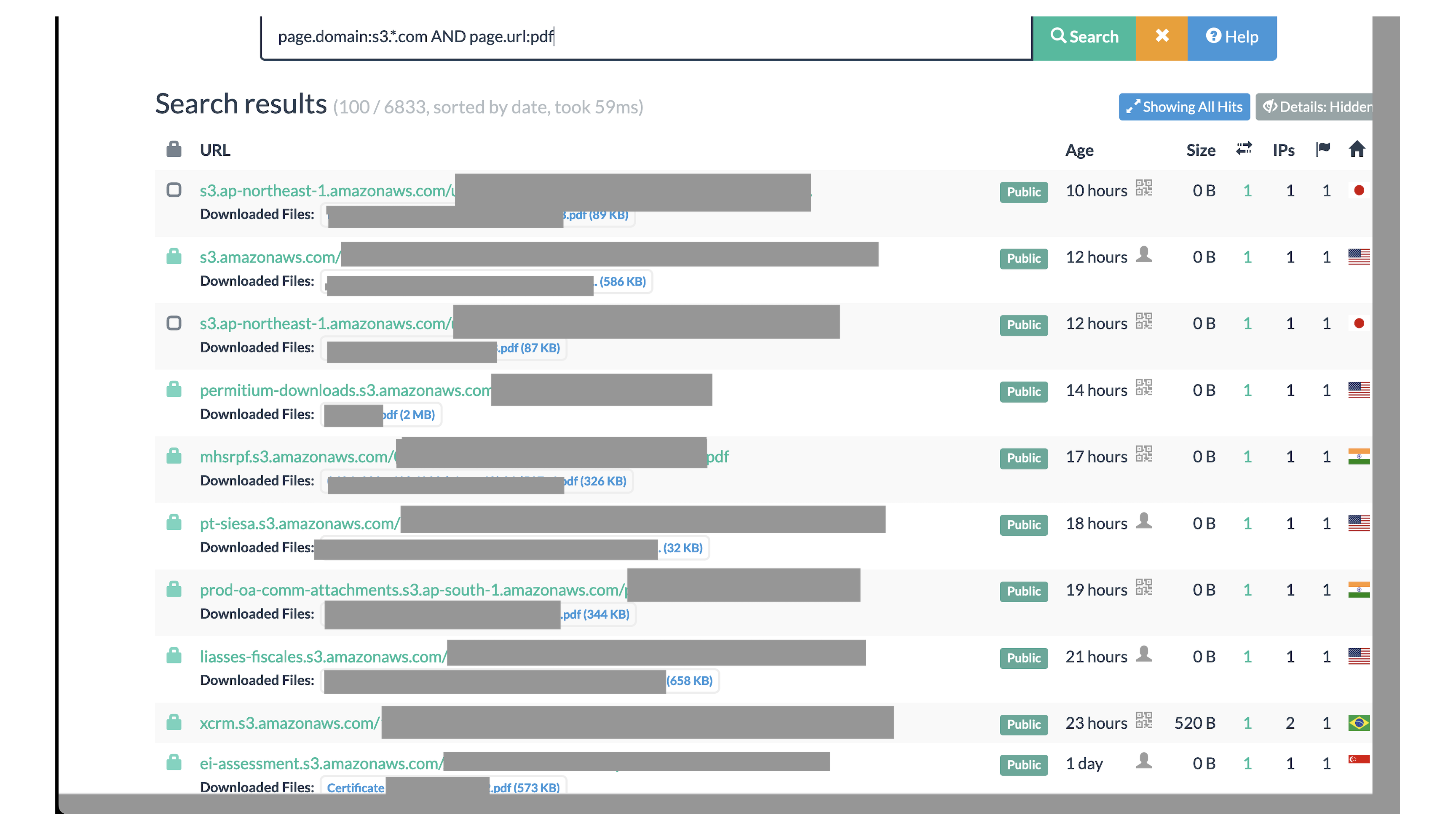

- Files shared using cloud storage tools (e.g.

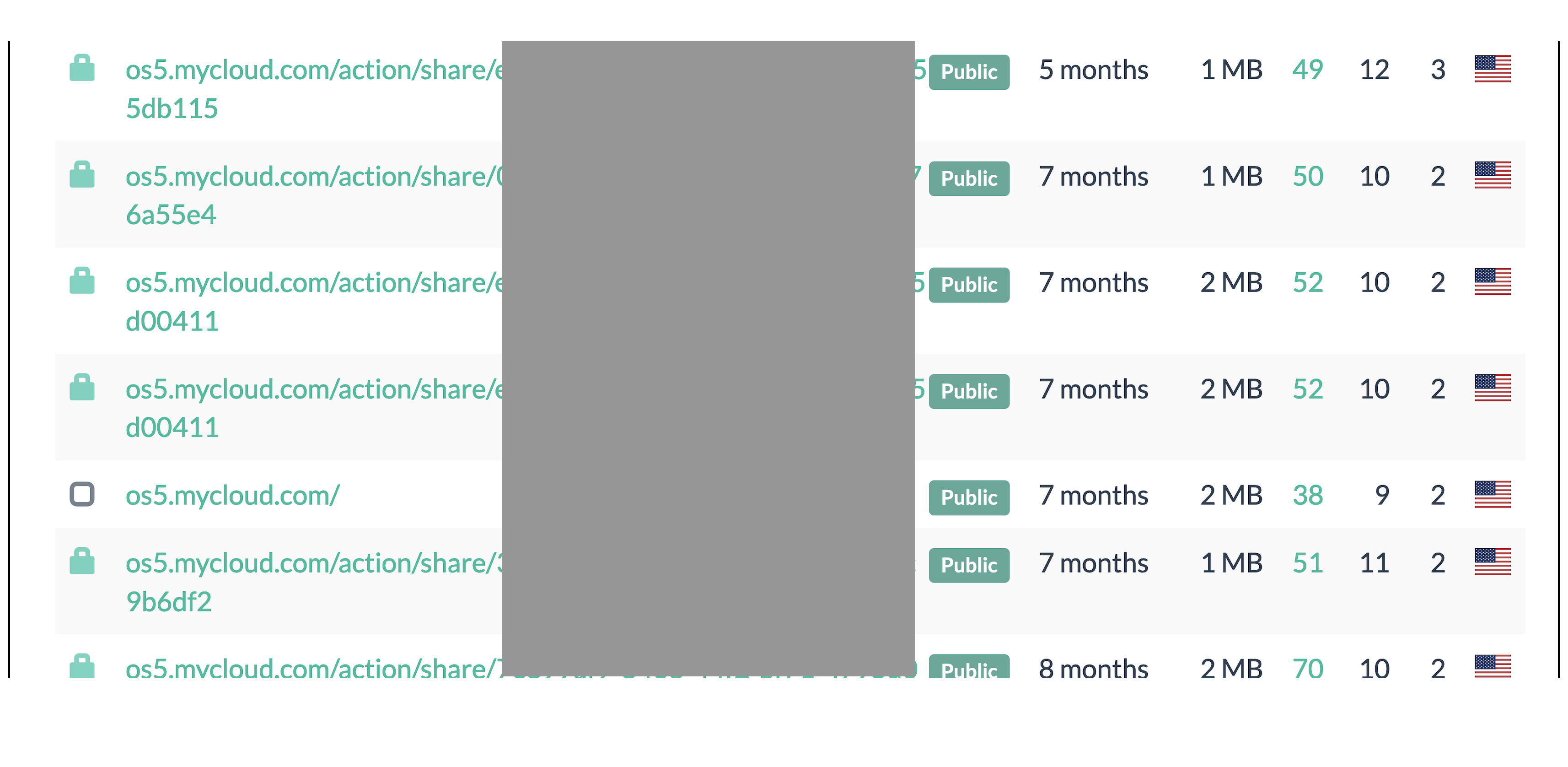

Dropbox,iCLoud,Sync,Egnyte,Ionos Hidrive,AWS S3) - Cloud connected NAS tools (e.g.

Western Digital Mycloud) - Corporate communication (e.g.

Slido,Zoom,Onedrive,Airtable) - Password reset links, Oauth sign-in links

All of these have one thing in common: they let anyone access shared content through a single private link, relying on random identifiers in the link for security. Some links can be protected further with a password or passphrase; in those cases, having the link alone does not result in data exposure.

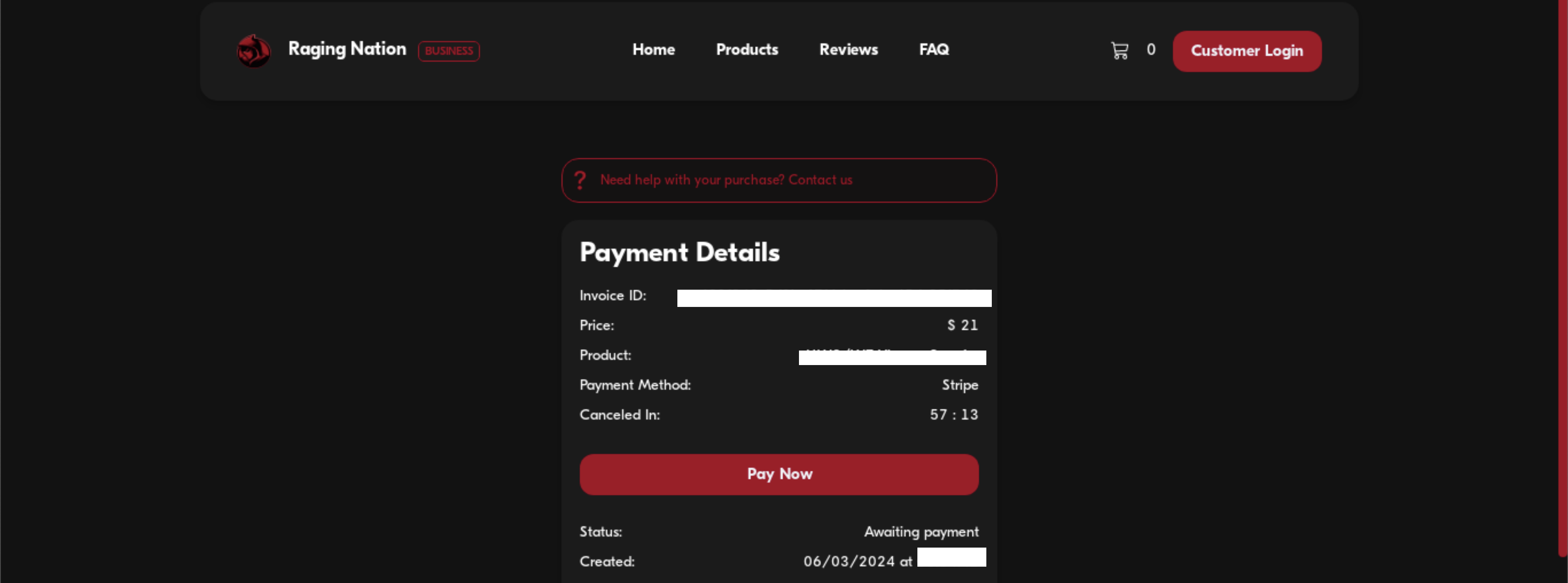

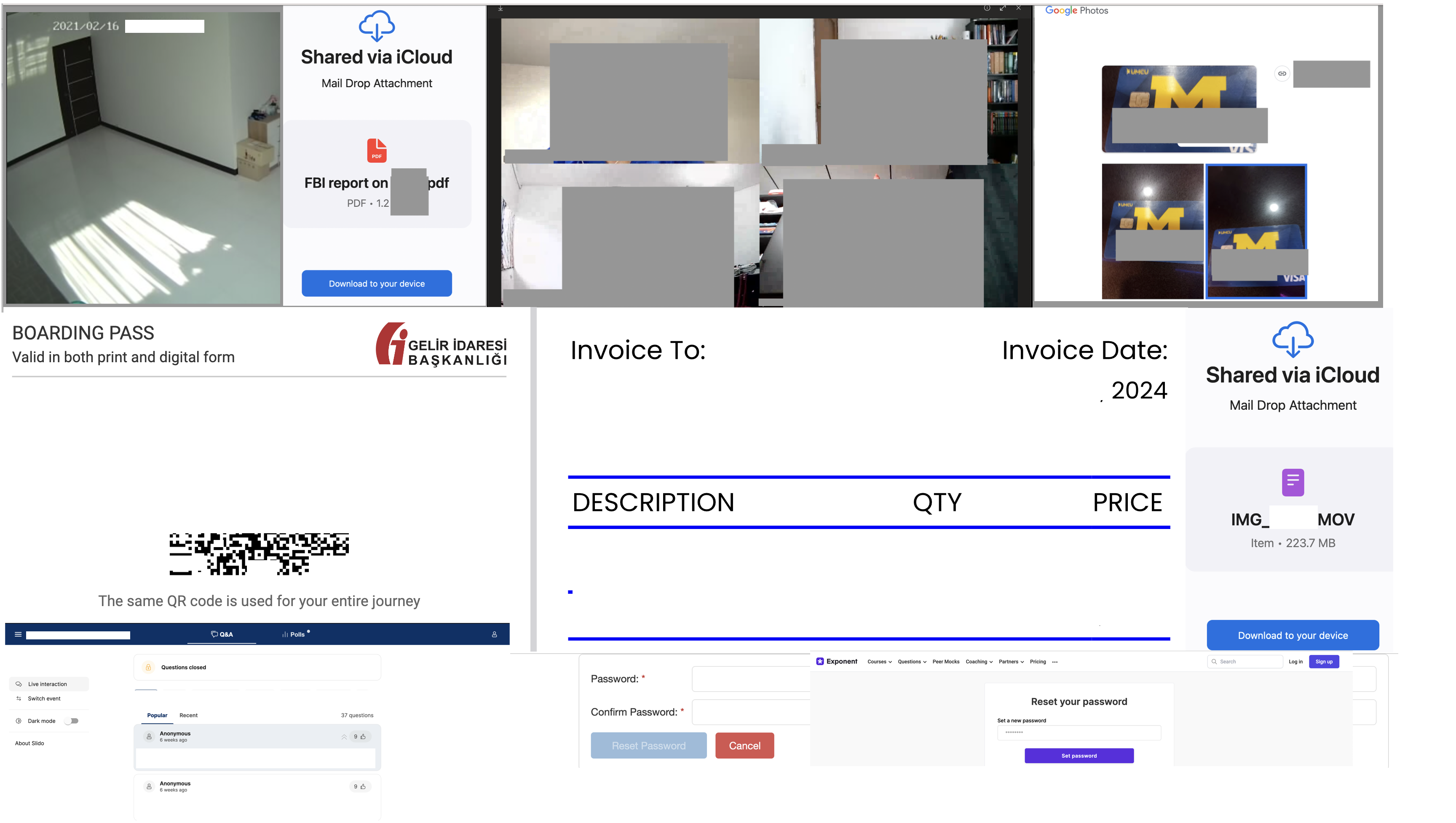

Some screenshots I grabbed from urlscan.io before they were filtered out after I reached out to them (they were quick to respond):

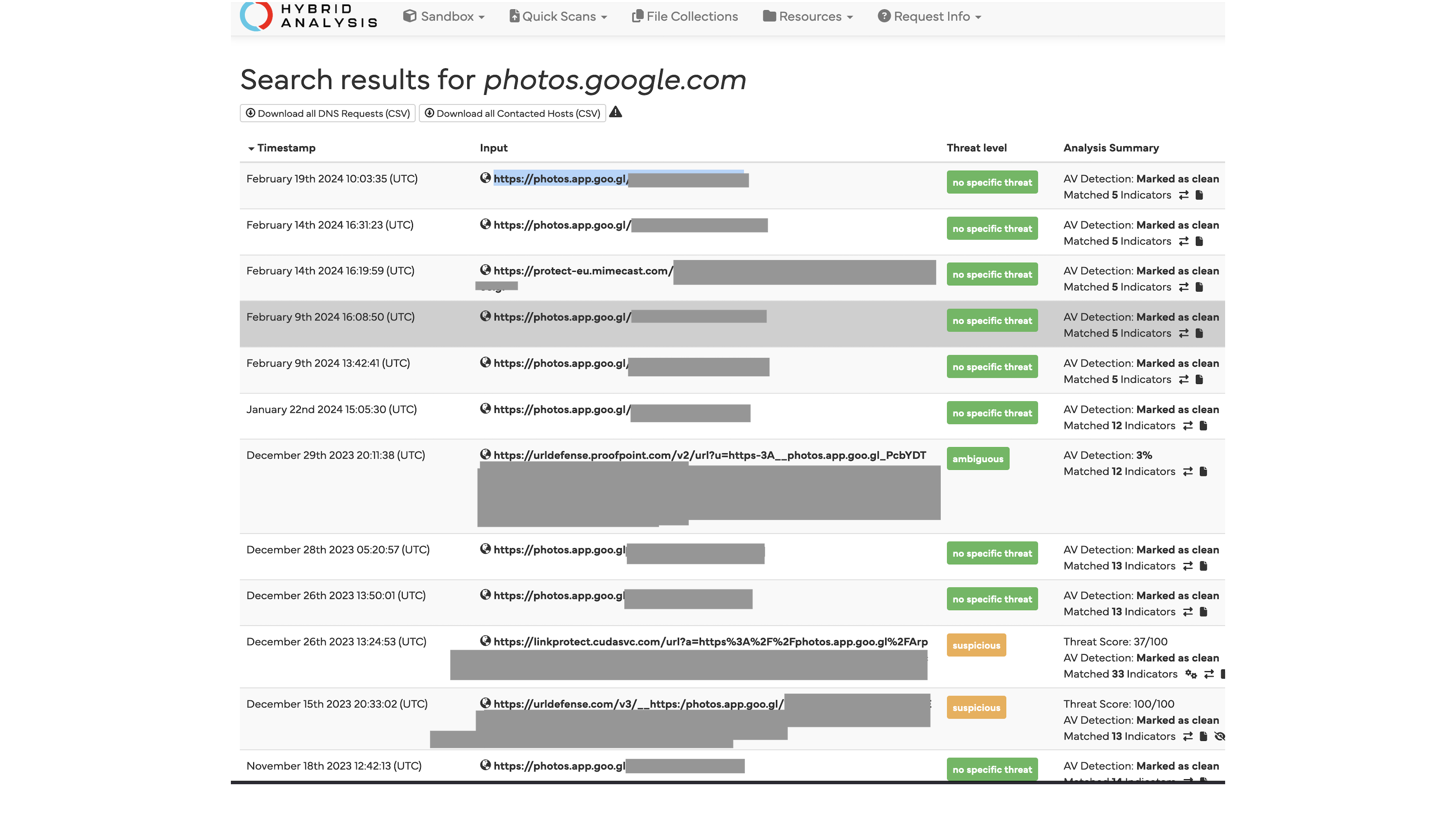

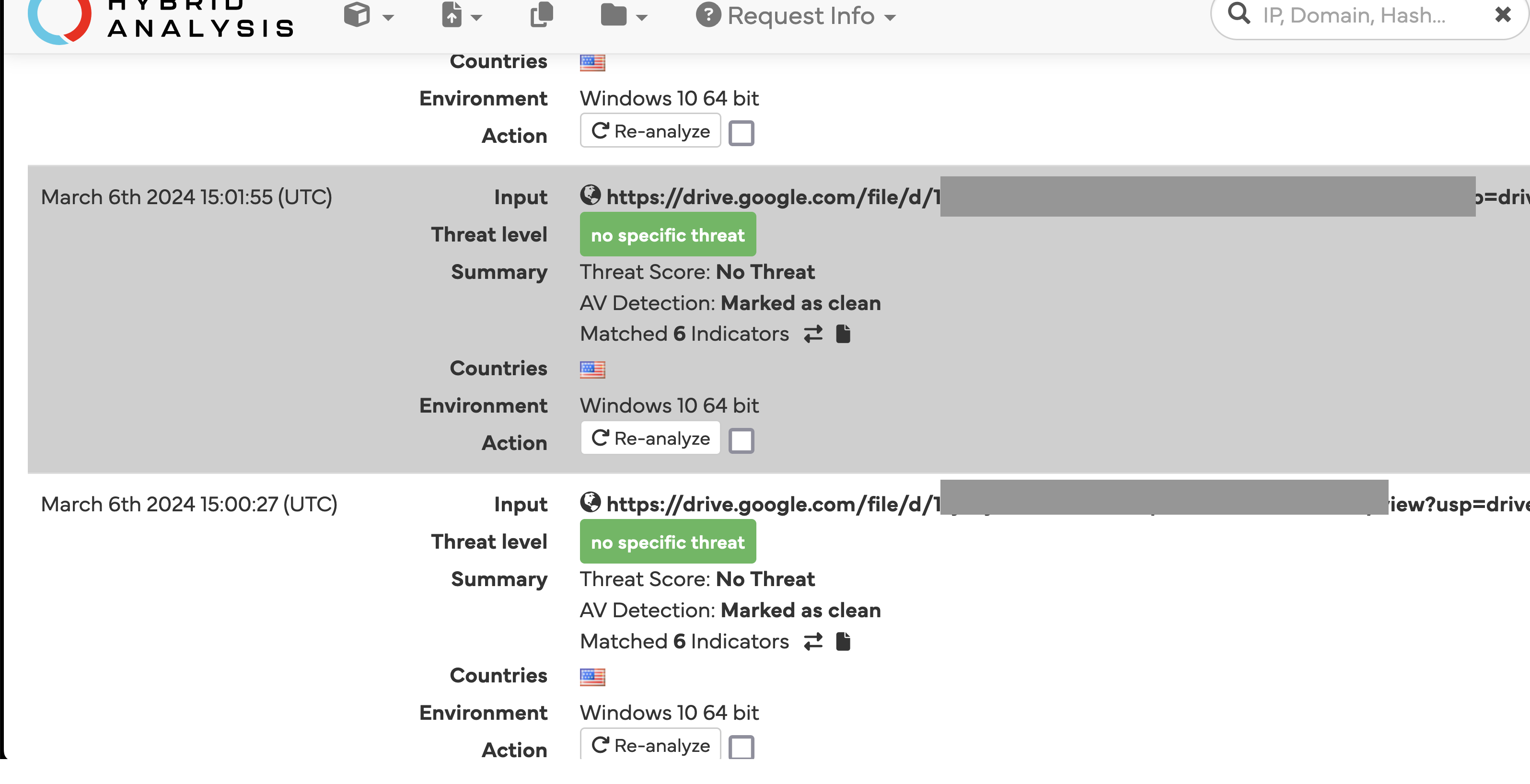

A lot of these submissions came from falconsandbox, as shown in the tags on urlscan.io, so I broadened my analysis to include Hybrid Analysis (owned by CrowdStrike) as well.

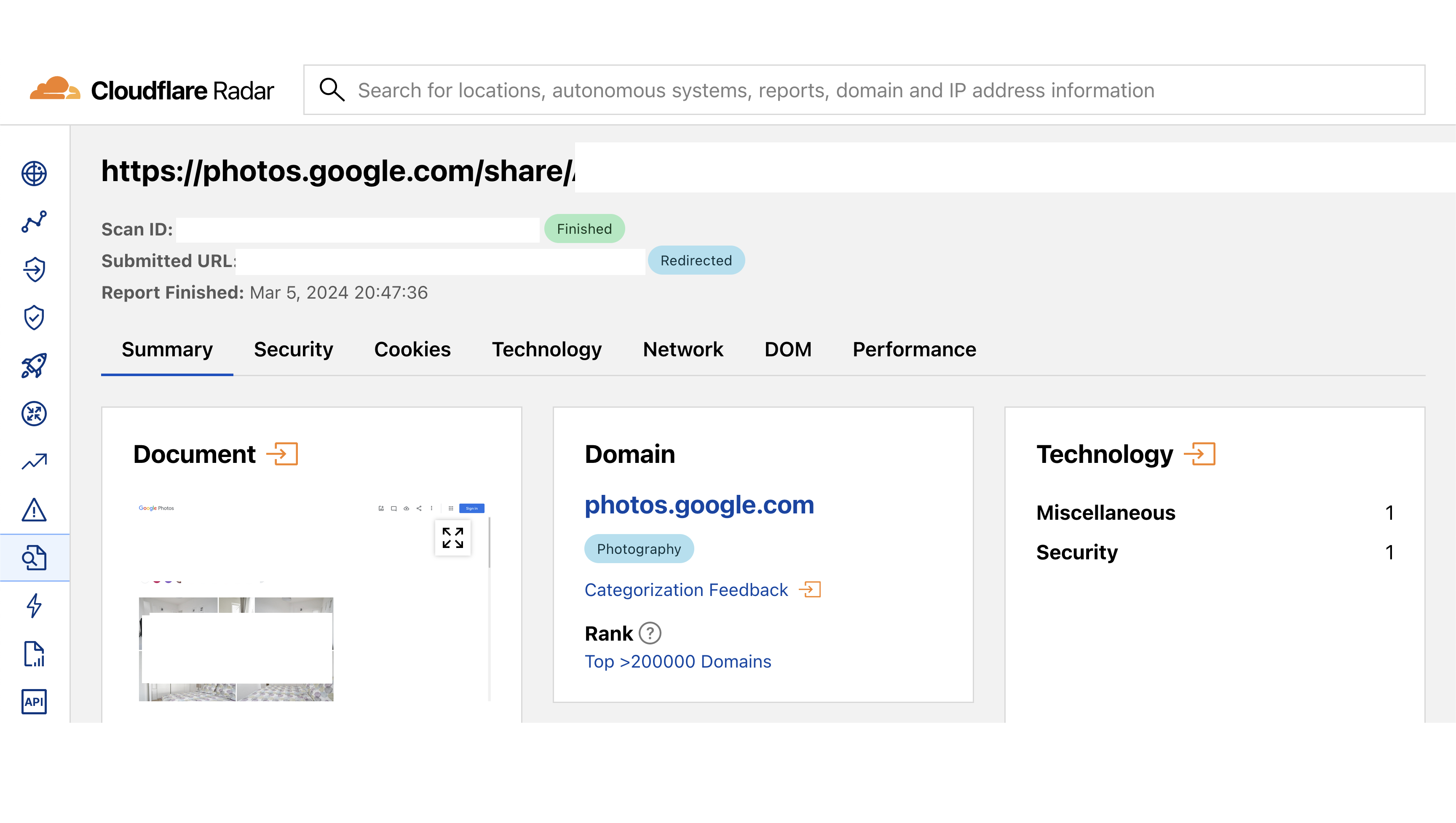

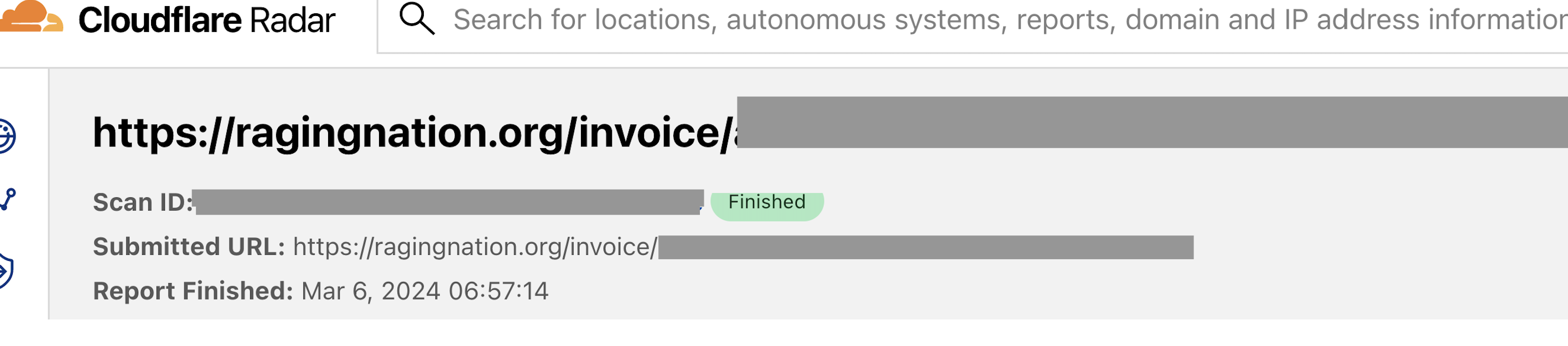

Cloudflare Radar is another, newer tool that has the potential to become widely used and already exposes some private links as public data.

Some broad categories of sensitive content I came across:

- Private files including tax documents, invoices, photos, business communications

- Shared secrets using onetimesecret

- Smart home device recordings

- Meeting recordings stored in the cloud

Who is responsible?

Now that is a tough one to answer. From the Hybrid Analysis terms and conditions of use:

Hybrid Analysis analyses, publishes, and shares Submitted Content from users as part of providing a cybersecurity community resource and is not responsible for the content or information which may incidentally appear in such submissions or be included in automatically-generated reports.

From urlscan.io:

You specifically acknowledge that urlscan shall not be liable for any user content or conduct.

You are responsible for all content posted and activity that occurs under your account.

As such, there does not seem to be any mechanism in place to review the existing content and flag/remove potentially sensitive links. Implementing it in an automated fashion might also not be trivial.
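To illustrate why automated review is not trivial, here is a minimal sketch of a naive heuristic that flags URLs which might be sensitive. Everything in it is an assumption made for illustration: the host list, the parameter names, the length and entropy thresholds, and the helper names `looks_random` and `flag_reasons` are hypothetical and not taken from any of the services discussed.

```python
import math
import re
from collections import Counter
from urllib.parse import parse_qsl, urlparse

# Illustrative only: a few hosts of services named in this post, not a complete list.
SHARING_HOSTS = ("dropbox.com", "icloud.com", "zoom.us", "onetimesecret.com")
# Illustrative only: query parameter names that often carry secrets.
SECRET_PARAMS = {"token", "key", "sig", "signature", "code", "password", "pwd"}


def shannon_entropy(text: str) -> float:
    """Return the Shannon entropy of `text` in bits per character."""
    if not text:
        return 0.0
    counts = Counter(text)
    total = len(text)
    return -sum((n / total) * math.log2(n / total) for n in counts.values())


def looks_random(segment: str, min_len: int = 16, min_entropy: float = 3.5) -> bool:
    """Guess whether a path segment or parameter value is a random identifier.

    The thresholds are arbitrary assumptions and would need tuning on real data.
    """
    return len(segment) >= min_len and shannon_entropy(segment) >= min_entropy


def flag_reasons(url: str) -> list[str]:
    """Return the reasons a URL might be sensitive (empty list if none)."""
    parsed = urlparse(url)
    host = (parsed.hostname or "").lower()
    reasons = []
    if any(host == h or host.endswith("." + h) for h in SHARING_HOSTS):
        reasons.append("sharing-service host")
    for name, value in parse_qsl(parsed.query):
        if name.lower() in SECRET_PARAMS:
            reasons.append(f"secret-like parameter: {name}")
        elif looks_random(value):
            reasons.append(f"random-looking value in parameter: {name}")
    # Split the path on "/" and "." so file extensions do not dilute a token.
    if any(looks_random(seg) for seg in re.split(r"[/.]", parsed.path)):
        reasons.append("random-looking path segment")
    return reasons
```

A heuristic like this will produce both false positives (long but harmless identifiers) and false negatives (short tokens, or secrets in URL fragments), which is part of why a reliable automated filter is hard to build.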

As a security researcher, it is also hard to figure out the source of these links. I came across this wonderful analysis by Positive Security, who focused on urlscan.io and used canary tokens to detect potential automated sources (security tools scanning emails for potentially malicious links), and also reached out to users via email. I was able to validate this behavior using canary links as well.

We are Threat hunters! All your links are belong to us!

urlscan Pro allows access for paid users/companies to a broader category of scans, including not just Public but also Unlisted scans.

Unlisted means that the scan will not be visible on the public page or search results, but will be visible to customers of the urlscan Pro platform. We only admit customers to urlscan Pro which are either vetted security researchers or reputable corporations. Source

Cortex-Analyzers from TheHive is an example I would like to outline.

It explicitly uses the public:on configuration for scans in its urlscan.io analyzer, which makes the links appear as unlisted even if the account’s visibility in urlscan is set to Private. These scans can then easily be accessed by urlscan Pro users and by platforms based on that information.

I would expect much more sensitive information to be prone to leaks in this manner, although that data is not public and is only visible to urlscan Pro users. I hope they vet the users carefully.
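Tools that submit scans automatically can reduce this risk by setting the visibility explicitly on every submission instead of relying on account defaults. The sketch below only builds the request and does not send it. The endpoint, the `API-Key` header, and the `visibility` field reflect my understanding of the urlscan.io submission API and should be checked against the current documentation; `build_scan_request` is a hypothetical helper name.

```python
import json
import urllib.request

# Assumption: submission endpoint as described in the urlscan.io API docs.
SCAN_ENDPOINT = "https://urlscan.io/api/v1/scan/"
ALLOWED_VISIBILITY = {"public", "unlisted", "private"}


def build_scan_request(
    url: str, api_key: str, visibility: str = "private"
) -> urllib.request.Request:
    """Build (but do not send) a scan submission with explicit visibility.

    Defaults to "private" so that a forgotten argument never results in a
    public or unlisted scan.
    """
    if visibility not in ALLOWED_VISIBILITY:
        raise ValueError(f"unsupported visibility: {visibility!r}")
    payload = json.dumps({"url": url, "visibility": visibility}).encode("utf-8")
    return urllib.request.Request(
        SCAN_ENDPOINT,
        data=payload,
        headers={"Content-Type": "application/json", "API-Key": api_key},
        method="POST",
    )
```

Sending the request would then be a call such as `urllib.request.urlopen(build_scan_request(link, api_key))`, with `link` and `api_key` supplied by the caller. Defaulting to the most restrictive option means a scan only becomes visible to others through a deliberate choice.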

Counts for scans in each category from urlscan.io for the last 24 hours:

- 398563 Public

- 328147 Unlisted

- 955432 Private

I used canary tokens to establish:

- A link submitted to urlscan.io as unlisted was accessed 12 times within 1 hour of submission

- A link submitted to hybrid-analysis.com via the API (not through the browser, which shows an explicit warning that submissions are public content) was accessed 10 times within 1 hour of submission

- Some IP addresses accessed both unique links submitted to these services simultaneously and use source IP anonymization services.

A list of these IP addresses is here.
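The canary measurements above can be sketched roughly as follows: generate one unguessable link per submission, then count the hits on each link within an hour and look for source IPs that requested more than one link. This is a minimal sketch and not the setup I actually used; the `Hit` record, the function names, and the idea of reading hits from your own web server log are all assumptions for illustration.

```python
import secrets
from datetime import datetime, timedelta
from typing import Iterable, NamedTuple


class Hit(NamedTuple):
    """One request to a canary link, as parsed from a server log."""
    token: str
    source_ip: str
    seen_at: datetime


def make_canary_url(base_url: str) -> tuple[str, str]:
    """Return (token, url) with a random token unique to one submission."""
    token = secrets.token_urlsafe(16)
    return token, f"{base_url.rstrip('/')}/{token}"


def hits_within(
    hits: Iterable[Hit],
    token: str,
    submitted_at: datetime,
    window: timedelta = timedelta(hours=1),
) -> int:
    """Count hits on one token inside the window after submission."""
    deadline = submitted_at + window
    return sum(
        1 for h in hits if h.token == token and submitted_at <= h.seen_at <= deadline
    )


def shared_sources(hits: Iterable[Hit], token_a: str, token_b: str) -> set[str]:
    """Return source IPs that requested both canary links."""
    hits = list(hits)  # allow a generator to be scanned twice
    ips_a = {h.source_ip for h in hits if h.token == token_a}
    ips_b = {h.source_ip for h in hits if h.token == token_b}
    return ips_a & ips_b
```

Because each token is submitted to exactly one service and nowhere else, any request for it must come from that service or from someone who obtained the link through it. An IP that shows up for both tokens is therefore pulling data from both services.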

How to get sensitive links removed?

Both urlscan.io and Hybrid Analysis allow flagging links to get them removed.

- https://urlscan.io/docs/faq/

- https://www.hybrid-analysis.com/knowledge-base/removing-uploaded-sensitive-files

For Hybrid Analysis, it is a bit more complex. Quoting from their knowledge base link above:

All files submitted to the public Sandbox at https://www.hybrid-analysis.com/ will be searchable and available to the world.

Even if the checkbox “Do not share my sample with the community” is checked, the screenshots and actual report will still be made available. The “do not share” portion only applies to the actual input sample.

Conclusion

This does leave me with mixed feelings. I am quite sure that this problem is here to stay. Perhaps a default of “keep the scans private” would work best, but it would defeat the purpose of most threat intelligence and analysis sharing practices in the security community. Be mindful of scan visibility while using these services.

Meanwhile, bounty hunters are using this already to report leaked data to companies directly ;) Hell, one of my submissions to a notable payment processing company even turned out to be a “Duplicate”, so I am definitely not the first one to notice this in the wild.

Disclaimer

If you choose to access some of these links/files from URL databases, please be wary of actual malicious files and links. Some of these are just phishing attempts and may contain actual malware. Please use a sandbox environment.